Google Indexing API use case.

The Google Indexing API (Application Programming Interface) lets site owners notify Google when pages are added or updated. When webmasters use this API, Google prioritises the submitted URLs for crawling and indexing. The Google Search Console web interface normally limits manual submissions to only 10 a day, so the Python solution below is a handy use case for webmasters. With the Indexing API, the default quota allows around 200 URL submissions per day, and several submissions can be sent together in a single batch request, which is really great. You can also ask Google to increase this quota.
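To make the submission format concrete, here is a minimal sketch of how the notification bodies could be prepared and split into batches. The helper names `build_notification` and `chunked` are hypothetical, and the chunk size of 100 used in the usage note is an assumption, so check the limits that apply to your own project.

```python
from itertools import islice

# Notification types accepted by the publish call.
VALID_TYPES = {"URL_UPDATED", "URL_DELETED"}


def build_notification(url, api_type="URL_UPDATED"):
    """Return the request body for one URL notification."""
    if api_type not in VALID_TYPES:
        raise ValueError(f"Unsupported notification type: {api_type!r}")
    return {"url": url, "type": api_type}


def chunked(items, size):
    """Yield successive lists holding at most `size` items each."""
    if size < 1:
        raise ValueError("size must be at least 1")
    iterator = iter(items)
    while True:
        chunk = list(islice(iterator, size))
        if not chunk:
            return
        yield chunk
```

For example, `list(chunked(bodies, 100))` turns a list of 250 bodies into three chunks, each of which could be sent as a separate batch request.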


- Updated: 2026-05-21 by Andrey BRATUS, Senior Data Analyst.

Before running the script below, complete the following steps:

1. Go to the Google developer console and create a new project.
2. Create a service account.
3. Create JSON API keys for the service account.
4. Enable the Indexing API.
5. Go to Google Search Console (Webmaster Center) and give Owner status to the service account.
6. Send batch requests to the Indexing API with Python, and don't forget to insert your actual data (JSON key, URLs...).
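Granting Owner status requires the service account's email address, which is stored in the downloaded JSON key under `client_email`. The following is a small sketch, not part of the original script, that checks a key file and reads that address. The helper names `load_key` and `service_account_email` are hypothetical, and the file name `credentials.json` is only an assumed default.

```python
import json

# Fields a service account key is expected to contain for this check.
REQUIRED_FIELDS = ("type", "client_email", "private_key")


def load_key(path="credentials.json"):
    """Read the JSON key file downloaded from the developer console."""
    with open(path, encoding="utf-8") as handle:
        return json.load(handle)


def service_account_email(key_data):
    """Validate the parsed key and return the service account email."""
    missing = [name for name in REQUIRED_FIELDS if name not in key_data]
    if missing:
        raise ValueError(f"Key file is missing fields: {', '.join(missing)}")
    if key_data["type"] != "service_account":
        raise ValueError("Key file is not a service account key")
    return key_data["client_email"]
```

Calling `service_account_email(load_key())` returns the address to add as an owner of the property.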

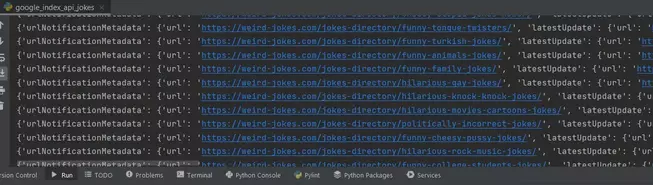

Google Indexing API bot Python code:

```python
from googleapiclient.discovery import build
from oauth2client.service_account import ServiceAccountCredentials

# URLs to submit, mapped to the notification type
# ('URL_UPDATED' or 'URL_DELETED').
urls = {
    'https://python-code.pro/': 'URL_UPDATED',
    'https://python-code.pro/webdirectorypro/': 'URL_UPDATED',
}

JSON_KEY_FILE = "credentials.json"
SCOPES = ["https://www.googleapis.com/auth/indexing"]
# Not used directly by the client library below; kept for reference.
ENDPOINT = "https://indexing.googleapis.com/v3/urlNotifications:publish"

# Authorize credentials
credentials = ServiceAccountCredentials.from_json_keyfile_name(JSON_KEY_FILE, scopes=SCOPES)

# Build service
service = build('indexing', 'v3', credentials=credentials)


def insert_event(request_id, response, exception):
    """Batch callback: print the error or the API response for each URL."""
    if exception is not None:
        print(exception)
    else:
        print(response)


# Queue one publish call per URL and send them all in a single batch.
batch = service.new_batch_http_request(callback=insert_event)

for url, api_type in urls.items():
    batch.add(service.urlNotifications().publish(
        body={"url": url, "type": api_type}))

batch.execute()
```
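The callback in the script above only prints each result. As a possible variation, a callable object can collect the outcomes so that failed URLs can be reviewed or resubmitted later. This is a sketch under assumptions: the class name `BatchResults` is hypothetical, and it relies only on the `(request_id, response, exception)` callback signature already used above.

```python
class BatchResults:
    """Callable batch callback that stores outcomes instead of printing them."""

    def __init__(self):
        self.succeeded = []  # (request_id, response) pairs
        self.failed = []     # (request_id, exception) pairs

    def __call__(self, request_id, response, exception):
        # The batch invokes this once per queued request.
        if exception is not None:
            self.failed.append((request_id, exception))
        else:
            self.succeeded.append((request_id, response))

    def summary(self):
        """Return a short human-readable count of outcomes."""
        return f"{len(self.succeeded)} succeeded, {len(self.failed)} failed"
```

To use it, create `results = BatchResults()` and pass `callback=results` to `service.new_batch_http_request`. Passing `request_id=url` to `batch.add` makes each stored entry traceable to the URL it belongs to.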